The Backstory

In late 2006, I began to notice that I was accumulating a lot of data on a lot of different hard drives. After a catastrophic disk failure on one of my systems, I decided that it was time to invest in a RAID-based storage solution. Being me, the decision came down to buy vs. build. My free time was about to evaporate, as we were preparing for the birth of our first child, so I opted to buy, hoping that the expandable RAID solution of the Infrant ReadyNAS NV+ would save me build, configuration and management time. It did! It was a great solution.

Fast forward through 3 complete (and a fourth, almost complete) disk set replacements, a power supply replacement in 2011 and a fan replacement in early 2020. I was running out of space, and the device didn’t have official support for individual drives larger than 2TB according to my last research. I had also been receiving numerous SMART errors from one of the disks for years, even after replacing that very disk a couple times. To me, this suggested an issue with the NAS, as the disks always passed SMART tests on another machine.

My Use Case

In anticipation of a new NAS solution, I got to thinking about how I wanted all of this to work together. What was my workflow? Did cloud storage finally make financial sense? Were SSDs cost-effective yet? I had lots of questions. During this planning phase, I recalled that my friend, Ted, had told me about a good deal on cloud storage with OwnCube. They are a hosted NextCloud provider. They sometimes offer a one-time flat fee special for unlimited storage so long as you have at least their basic plan. This made economic sense for me, and I bit the bullet. This would solve the storage capacity problem. I also wanted to run a home media server (not from the NAS, necessarily), which would require relatively low latency access to my files. I decided that having a mirror of what was in my cloud storage would be a good solution to that problem.

The need for a simple local mirror of my data drastically changed my requirements for a NAS. I didn’t need to worry about protecting against multiple disk failures. Really, I wasn’t worried about disk failures at all, as it would only impact the local copy of my files. This eliminated the need for hot swap bays, and any RAID levels beyond RAID-1 (disk mirroring). In fact, I ended up not using RAID at all, but we’ll get to that later.

My requirements/wishlist ended up looking something like this:

- Relatively small form factor and quiet

- RAID-1 or equivalent storage protection (would like ZFS)

- Simple configuration

- Hot swap bays not necessary

- NextCloud sync

- Prioritize read speed over write speed

- Support NFS

So, my solution was fairly simple. I’d build a 2 disk mirror that would run the NextCloud client and sync all my files on a regular basis. How Hard Could it Be?™

In my research, I came across a variety of solutions that would meet my needs. So, I took the opportunity to try something new. Pine64 builds small, powerful ARM-based SBCs. I thought their RockPro64 would be a perfect fit for my new NAS. They even sell a NAS-friendly enclosure made specifically for the RockPro64. It helped sway my decision that Ted built the same NAS a few months prior and reported satisfactory results. So, I loaded up my cart with the recommended parts and waited for my kit to arrive from China.

The Build

Putting the physical pieces together is quite well-documented, so I won’t detail it here. Assembly time was probably under 30 min., though I didn’t time it. When I received the SBC, case, and other parts, I hadn’t received my hard drives yet, so I set up the system with a pair of older 2TB disks that were spares for or rejects from my old NAS. It wasn’t important that they be reliable since I really just wanted to test out a few things with them. In fact, I ignored the errors that I saw, chalking them up to bad disks (spoiler: it ended up being a problem with the SATA controller).

Since I only needed to purchase 3 disks (the third for backup/hot spare), I thought I should buy higher capacity disks. After uploading all my data to my NextCloud instance, it appeared that I needed a tad more than 4TB of storage. Thanks to my friend Brian’s blog post, I decided to try shucking some low-cost 8TB external drives. They happened to be on sale for less than buying a bare internal drive of the same capacity. In my research I came across a technology called SMR (shingled magnetic recording). The big drawback with SMR drives is that sustained write speeds can be particularly slow, though read speeds are not affected. For my use case, this would not be an issue, so I didn’t have a problem if my shucked drives turned out to use SMR technology. Once I received my drives, I followed instructions from a few videos specific to my model and, as far as my research revealed, the drives inside were not SMR drives.

I removed the 2TB drives and swapped in the 8TB drives. This took several minutes since the NAS case doesn’t have hot-swap drive bays. The first thing I noticed on power-up was that the new drives were much quieter than the old ones. Pleased with that discovery, I set about configuring the software.

Configuration

My goal was to keep the system setup as simple and “stock” as possible. In the event of a disastrous failure, I wanted to be able to get back up and running as quickly as possible.

Selecting an OS

The first OS/distribution I tried was OpenMediaVault. I downloaded the RockPro64 bootable image and wrote it to a microSD card using Etcher. I really wanted this to “Just Work”, because it would save me a lot of time and effort. The only thing really missing in my mind was ZFS support. Since this NAS would simply be a mirror of my cloud storage, I was willing to sacrifice ZFS support for ease of administration. Unfortunately, I couldn’t get OMV to work. It booted up just fine, but I was unable to get the web-based configuration tool to successfully store a static IP address. I decided to try setting static IP information from the console, but that failed as well. They have a convenient command line utility for configuration, but for some reason, it failed and exited when I tried to set the IP address. So, rather than try to debug this, I decided to move on and try the Ubuntu 18.04-based ayufan image. I was able to get this up and running with no issues.

Building the ZFS Kernel Module

The wrinkle here is that Ubuntu 18.04 doesn’t have a ZFS module by default. So, I’d need to build and install the kernel module myself. I know I said I wanted to keep things stock and simple, but this is the one area where I strayed from that principle. And, having done it now, it wasn’t so bad. To get started, Ted pointed me to this forum post, and pointed out that step 3 contained the key elements in getting ZFS built. The steps I completed were as follows (I have not gone back and rebuilt from scratch after I got this working, so I can’t promise this is 100% accurate):

- install python 2 (may not be necessary)

- install dkms

- install spl

- sudo apt-get source zfs-linux

- sudo apt install zfs-dkms

- sudo dkms build zfs-linux/0.7.5 (this succeeded, and then sudo modprobe zfs succeeded)

- lsmod shows it installed
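The last check in the list above can also be scripted. lsmod is a formatted view of /proc/modules, so a minimal sketch (assuming the module is named zfs, and with function names made up for illustration) only needs to read that file:

```python
from pathlib import Path


def loaded_modules(proc_modules_text):
    """Return the set of module names found in /proc/modules-style text."""
    # Each line begins with the module name, e.g. "zfs 3563520 3 - Live 0x...".
    return {line.split()[0] for line in proc_modules_text.splitlines() if line.strip()}


def zfs_is_loaded(proc_modules_path="/proc/modules"):
    """True if a module named 'zfs' appears in the kernel's module list."""
    return "zfs" in loaded_modules(Path(proc_modules_path).read_text())
```

Calling zfs_is_loaded() after sudo modprobe zfs should return True if the build and load both worked.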

Creating a ZFS Mirror Pool

Now that I had a working ZFS kernel module installed, I did a bit of research on ZFS pools. I discovered that I didn’t need to use RAIDZ2, I could simply create a ZFS mirror pool. I followed these instructions to create my mirror pool. The big advantage I saw in the simple mirror pool was that maintaining it appeared very easy. Disks can be added and removed from the pool. It’s even possible to add a disk, allow it to make a copy of the pool data (resilvering in ZFS parlance), and then remove the disk. This could be used as a makeshift backup in a pinch.
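For reference, the pool operations described above map onto three zpool subcommands. This is a rough sketch rather than the exact commands from the linked instructions: the helper names are invented, and the pool name and device paths in the usage note are placeholders. Each helper only builds the argument list, so nothing touches a disk until you run it yourself.

```python
def mirror_create_cmd(pool, disk_a, disk_b):
    """Command to create a two-disk mirror pool."""
    return ["zpool", "create", pool, "mirror", disk_a, disk_b]


def attach_cmd(pool, existing_disk, new_disk):
    """Command to add new_disk as another mirror of existing_disk (starts a resilver)."""
    return ["zpool", "attach", pool, existing_disk, new_disk]


def detach_cmd(pool, disk):
    """Command to remove one disk from the mirror once resilvering has finished."""
    return ["zpool", "detach", pool, disk]
```

For example, mirror_create_cmd("tank", "/dev/sda", "/dev/sdb") gives the list for zpool create tank mirror /dev/sda /dev/sdb, which could be passed to subprocess.run(cmd, check=True) as root. The makeshift backup would be attach_cmd, a wait for the resilver to complete in zpool status, and then detach_cmd.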

Fan Control

The fan that came from Pine64 connected directly to the SBC via a 2-pin connector, and is PWM-controlled. I tried setting the fan manually according to this forum post. However, my fan didn’t move. After an embarrassingly long time of trying to get this to work, I finally took the case off to check that the connector was properly attached and realized that one of the SATA cables was fouling the fan blades. I tucked the cable away from the fan with a good clearance, verified that the cable was connected properly, and tried manually setting the fan on. It worked. In my debugging of this issue, I came across a tool called ATS and installed it. The install was a tad convoluted, but it worked. There is a config file to control threshold temperatures, and it runs as a systemd service.
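To give a feel for what threshold-based fan control does, here is a small sketch. It is not how ATS is implemented and does not use its config format. The thresholds and the linear ramp are assumptions chosen for illustration; the only fixed point is that Linux PWM fan values run from 0 (off) to 255 (full speed).

```python
def fan_pwm(temp_c, min_temp=40.0, max_temp=70.0, min_pwm=60, max_pwm=255):
    """Map a temperature in Celsius to a PWM duty value between 0 and 255."""
    if temp_c < min_temp:
        return 0  # cool enough: fan off
    if temp_c >= max_temp:
        return max_pwm  # hot: fan at full speed
    # Between the two thresholds, ramp linearly from min_pwm up to max_pwm.
    fraction = (temp_c - min_temp) / (max_temp - min_temp)
    return round(min_pwm + fraction * (max_pwm - min_pwm))
```

The result would then be written to the fan's pwm file under sysfs, whose exact path depends on the kernel and is not shown here.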

NextCloud Client

Syncing to my cloud provider is the key function for this entire build. I found that there is a command line option for starting a single sync action using the nextcloudcmd command. Unfortunately, this requires installing the entire NextCloud client, which isn’t onerous, but includes a lot of GUI libraries that seem unnecessary. I’m also not a huge fan of adding PPAs willy-nilly, but this is the major feature I wanted for my NAS, so I made the exception.

At some point in the future, I will create a simple systemd service that runs the nextcloudcmd command every 5 minutes. It will first check to see if the script is already running. If it is not running it will execute the command, performing a 2-way sync.
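A minimal sketch of that plan, assuming credentials live in ~/.netrc (the -n option) and using a lock file to detect a sync that is still running. The function names, the lock path, and the paths and URL in the usage note are all placeholders, not part of my actual setup.

```python
import fcntl
import subprocess


def try_lock(lock_path):
    """Return an open, exclusively locked file, or None if another run holds the lock."""
    handle = open(lock_path, "w")
    try:
        # LOCK_NB makes flock fail immediately instead of waiting for the lock.
        fcntl.flock(handle, fcntl.LOCK_EX | fcntl.LOCK_NB)
    except BlockingIOError:
        handle.close()
        return None
    return handle


def sync_command(local_dir, server_url):
    """Build the nextcloudcmd call for a single 2-way sync."""
    # -n reads the login from ~/.netrc; --non-interactive avoids blocking on prompts.
    return ["nextcloudcmd", "-n", "--non-interactive", local_dir, server_url]


def sync_once(lock_path, local_dir, server_url):
    """Run one sync unless a previous one is still going. Returns the exit code or None."""
    lock = try_lock(lock_path)
    if lock is None:
        return None  # previous sync still in progress, skip this round
    try:
        return subprocess.run(sync_command(local_dir, server_url)).returncode
    finally:
        lock.close()  # closing the file releases the lock
```

A systemd timer firing every 5 minutes could call something like sync_once("/run/lock/nextcloud-sync.lock", "/tank/cloud", "https://cloud.example.com"). Using a kernel-level file lock rather than looking for a process name means a crashed run never leaves a stale lock behind.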

SMART Monitoring

I also installed the smartmontools package that allows execution of SMART tests and fetches disk status. I may create a weekly report that gets mailed to me if I get around to it.
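If that report ever happens, the core of it could look something like the sketch below. It assumes ATA disks, where smartctl -H prints an "overall-health self-assessment" line; the function names and the report layout are made up for illustration.

```python
def smart_health(smartctl_output):
    """Return the overall-health verdict from `smartctl -H` text, or None if absent."""
    marker = "SMART overall-health self-assessment test result:"
    for line in smartctl_output.splitlines():
        if line.startswith(marker):
            return line[len(marker):].strip()
    return None


def health_report(outputs_by_device):
    """Format one line per disk from a {device: smartctl output} mapping."""
    lines = []
    for device, output in sorted(outputs_by_device.items()):
        verdict = smart_health(output) or "UNKNOWN (no verdict in output)"
        lines.append(f"{device}: {verdict}")
    return "\n".join(lines)
```

The UNKNOWN case matters here: a disk that cannot be read at all is itself a warning sign.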

UPS Monitoring

My old NAS had a UPS monitoring feature, so I wanted to keep that. I installed the apcupsd package, but I still need to set the threshold times for shutdowns. This should also alert other systems to shutdown as well, but I haven’t come up with a good solution for that yet.
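To make the threshold idea concrete, here is a sketch of the decision apcupsd makes with its BATTERYLEVEL and MINUTES settings, written against the "KEY : value" text that apcaccess prints. The threshold values and function names are placeholders, not settings I have chosen.

```python
def parse_apcaccess(text):
    """Turn `apcaccess` output ("KEY : value" lines) into a dict."""
    fields = {}
    for line in text.splitlines():
        # Split on the first colon only, since values such as dates contain colons.
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def should_shut_down(fields, battery_level=5.0, minutes=3.0):
    """True if the UPS is on battery and either threshold has been crossed."""
    if "ONBATT" not in fields.get("STATUS", ""):
        return False
    charge = float(fields["BCHARGE"].split()[0])      # e.g. "4.0 Percent"
    time_left = float(fields["TIMELEFT"].split()[0])  # e.g. "10.0 Minutes"
    return charge <= battery_level or time_left <= minutes
```

The same check could run on another host that polls the UPS status over the network, which might be one way to handle the "alert other systems" part.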

Filesharing via NFS

On my network, all of my hosts support NFS, so that is the only remote filesystem I set up.

Performance

My old NAS was abysmally slow, and I was hoping that the new NAS would far outpace it in performance. I was not disappointed! I used iperf3 to test basic network performance. The NIC on the RockPro64 was able to achieve between 937 and 943 Mbps in either direction.

During file transfers, I regularly see about 54 MB/s (432 Mbps) with large files through the Gnome Files GUI.
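The conversion between the two units is just a factor of 8 bits per byte. As a quick worked check, using 940 Mbps as a round stand-in for the 937 to 943 Mbps that iperf3 reported:

```python
def mb_per_s_to_mbps(mb_per_s):
    """Convert megabytes per second to megabits per second (8 bits per byte)."""
    return mb_per_s * 8


file_copy_mbps = mb_per_s_to_mbps(54)        # 432 Mbps
link_mbps = 940                              # assumed midpoint of the iperf3 range
link_fraction = file_copy_mbps / link_mbps   # share of the measured link speed used
```

So large file copies use a bit under half of what the network link itself can carry, which suggests the limit is somewhere other than the NIC.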

I realize that these are not exhaustive results, but they are indicative of what I’ve seen over the last few months of using the NAS, and give a rough idea of what to expect from this configuration. These are much better numbers than anything I ever saw on my old ReadyNAS.

Wrap-up

So, that’s all the basic details for the build and configuration of my Pine64 NAS. I’m really pleased with the performance of the system, and the build quality of the NAS enclosure and the RockPro64 SBC. I’d definitely recommend the Pine64 NAS if your use case is like mine. The only thing that gave me problems was the PCIe SATA controller I purchased from the Pine Store. If you’re not interested in the gory details of that “adventure”, you can stop reading here. Otherwise, this is a good place to talk about …

Trouble in Paradise

Well, it sounds like everything went swimmingly, doesn’t it? If you’ve made it this far, you’re looking for the ugly truth. Remember those disk errors I ignored at the top of this post? Those should have been a big, fat warning. Unfortunately, I dismissed them as problems with the old disks.

The first symptoms that appeared were:

- Sudden CKSUM errors on one of the disks in the ZFS mirror (/dev/sdb), resulting in a “degraded” state, and then an “offline” state, indicating that the disk was unavailable. The disk was subsequently automatically removed from the mirror by ZFS.

- When the disk became “unavailable” in the pool, smartctl was unable to read SMART data from the disk

- Unexpected system reboots, usually during large file transfers

Rebooting and resilvering the mirror usually resulted in a repaired mirror, but it wouldn’t be long (24-48 hours) before the mirror failed again. The errors occurred on both the original 2TB disks as well as the new 8TB disks, and usually affected the same device, /dev/sdb.
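Watching for this by hand gets old quickly. A sketch like the following pulls the per-device error counters out of zpool status text so that a script can flag the disk with a non-zero CKSUM column. The function names are invented, and rows whose counters are abbreviated (such as 1.2K) are skipped for simplicity.

```python
def device_errors(zpool_status_text):
    """Return {name: (state, read, write, cksum)} from the config table of `zpool status`."""
    rows = {}
    in_table = False
    for line in zpool_status_text.splitlines():
        parts = line.split()
        if parts[:5] == ["NAME", "STATE", "READ", "WRITE", "CKSUM"]:
            in_table = True  # header row: the device rows follow
            continue
        if not parts:
            in_table = False  # a blank line ends the table
            continue
        # Only accept rows whose three counter columns are plain integers.
        if in_table and len(parts) >= 5 and all(p.isdigit() for p in parts[2:5]):
            rows[parts[0]] = (parts[1], int(parts[2]), int(parts[3]), int(parts[4]))
    return rows


def devices_with_cksum_errors(zpool_status_text):
    """Names of pool members whose CKSUM counter is above zero."""
    return [name for name, row in device_errors(zpool_status_text).items() if row[3] > 0]
```

Fed the output of zpool status during one of these failures, devices_with_cksum_errors would be expected to return the affected disk, which in my case was nearly always sdb.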

The problem(s) had to stem from one (or more) of the following components:

- SATA cables

- Disk drives

- Heat

- Power supply

- My custom-built ZFS kernel module

- SATA controller

So, I set about to isolate each of the components to determine where the issue cropped up. The simplest and least expensive thing to check was the SATA cables.

SATA Cables

First, I swapped the cables with each other to see if the errors followed the “bad” cable. After swapping the cables, I rebuilt the mirror pool and started the NextCloud sync. Errors still appeared on /dev/sdb.

To me, that result supported the notion that a bad cable was not at fault.

Next, using the “bad” 2TB drive (/dev/sdb) that reported errors earlier, I

- Connected the disk to a SATA-to-USB adapter using the “bad” SATA cable from the new NAS enclosure and connected it to another computer

- Ran short and long SMART tests on it

- Created a ZFS pool with the single disk

- Copied over 100 GiB of large files (DVD ISO images and .mkv files) to the pool

- Ran zpool scrub on the pool

No errors!

Then, I connected the “good” 2TB disk (/dev/sda) to the same SATA cable and external adapter and repeated the above steps.

No errors.

Next, I connected the “bad” 8TB disk (also /dev/sdb) to the setup and repeated the steps.

No errors.

In my mind, this effectively eliminated both the “bad” cable and the “bad” disk as the source of errors, since I could write a large amount of data using the “bad” cable and “bad” disk combination on a different system.
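The per-disk test pass used in this section can be written down as a command plan. This is a sketch of the sequence, not a transcript of what I typed: the pool name, device, and source directory are placeholders, and the function only returns the commands instead of running them.

```python
def disk_test_plan(device, pool="scratch", source_dir="/path/to/large/files"):
    """Return one test pass as a list of argument lists, in the order to run them."""
    mountpoint = f"/{pool}"  # a new pool is mounted at /<poolname> by default
    return [
        # SMART self-tests run on the drive in the background, so wait for each
        # one to finish (check with `smartctl -a`) before starting the next step.
        ["smartctl", "-t", "short", device],
        ["smartctl", "-t", "long", device],
        ["zpool", "create", pool, device],     # single-disk pool, no redundancy
        ["cp", "-r", source_dir, mountpoint],  # write a large amount of data
        ["zpool", "scrub", pool],              # re-read and verify every block
        ["zpool", "status", "-v", pool],       # look for READ/WRITE/CKSUM errors
    ]
```

Behind some USB adapters, smartctl may also need a device type option such as -d sat before it can talk to the disk.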

Disk Drives

I read many years ago that some USB drive adapters don’t pass SMART data through to the host system properly. So, to more completely test the disks and eliminate this possibly false information, I used the power adapter from the SATA-to-USB adapter and connected a SATA-to-eSATA cable between the drive and the second computer, which has an eSATA port. I then ran steps 2-5 again on both 2TB disks and both 8TB disks. This configuration had the added benefit of isolating the disks from the potentially “bad” cable.

No errors.

Heat

Thinking through the past few days of experimentation, I recalled that when I did not limit the data rates for nextcloudcmd, the reboots occurred more frequently. So, I set the limits to 100mbps up and down and re-ran the sync. I noted that it took several hours longer for the reboots to occur. I also saw a few mentions in the Pine64 forums of the ASMedia asm1061-based SATA controller getting very hot. My controller was based on the asm1062 chip, but was functionally equivalent to the 1061. I noted that the chip got painfully hot to the touch, so I removed the cover of the NAS to allow more cool air access to the SATA controller and re-ran the sync. This appeared to gain a little more stable time before the CKSUM and SMART errors cropped up again. I decided to try attaching a small heat sink to the SATA controller chip. I ordered a few sets, rationalizing that if they didn’t solve my problem, I could just use them for my ever-expanding collection of Raspberry Pis. After attaching the heat sink, I performed a zpool scrub (~8hrs) and saw several CKSUM errors again, but no SMART errors. The mirror did not degrade this time. This looked promising! Until a few minutes later when the pool degraded and /dev/sdb was removed from the pool again.

By this time, I was quite frustrated, having spent the majority of a week tracking down this issue. But, I decided that this was an interesting enough problem to spend time on, so I persisted.

Power Supply

The next item to isolate was the power supply. Why the power supply? I began digging through the kernel log and saw lots of messages similar to the following:

Jul 1 16:04:17 gaia kernel: [87323.406416] ata2.00: exception Emask 0x1 SAct 0x60000000 SErr 0x0 action 0x0

Jul 1 16:04:17 gaia kernel: [87323.407431] ata2.00: irq_stat 0x40000008

Jul 1 16:04:17 gaia kernel: [87323.408014] ata2.00: failed command: READ FPDMA QUEUED

Jul 1 16:04:17 gaia kernel: [87323.409076] ata2.00: cmd 60/00:e8:c8:57:ed/01:00:f3:02:00/40 tag 29 ncq 131072 in

Jul 1 16:04:17 gaia kernel: [87323.409076] res 40/00:f0:c8:58:ed/00:00:f3:02:00/40 Emask 0x1 (device error)

Jul 1 16:04:17 gaia kernel: [87323.411285] ata2.00: status: { DRDY }
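When there are hundreds of these, counting them per ATA device makes it easier to see whether one port is responsible. Here is a small sketch that assumes the syslog line format shown above; the function name and the regular expression are my own.

```python
import re
from collections import Counter

# Matches e.g. "kernel: [87323.408014] ata2.00: failed command: READ FPDMA QUEUED"
ATA_LINE = re.compile(r"kernel: \[\s*[\d.]+\] (ata\d+(?:\.\d+)?): (.*)")


def failed_commands(log_text):
    """Count 'failed command' entries per ATA device in kernel log text."""
    counts = Counter()
    for line in log_text.splitlines():
        match = ATA_LINE.search(line)
        if match and match.group(2).startswith("failed command:"):
            counts[match.group(1)] += 1
    return counts
```

Running it over the excerpt above would count one failed command for ata2.00.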

A brief search revealed this Pine64 forum post, indicating that power might be the culprit. At this point, I didn’t have much to lose in trying, and it would eliminate one more potential source of the errors. The recommended power supply for the NAS case is rated at 12V and 5A, which should be plenty for 2 disks, the RockPro64 SBC and fan. I tested the output voltage of the power adapter with a multimeter and it read 12.3V, so the power supply appeared to be OK. For the next part of this test, I

- Powered one drive (/dev/sda) with the power connector included with the NAS enclosure (12V 5A)

- Powered the other disk with the SATA-to-USB adapter setup (the power adapter is physically separate from the USB connection)

My thinking was that this configuration would draw less current from the NAS power supply, potentially avoiding issues where the system wasn’t getting enough power, causing one disk to go offline. Even with this configuration, I still saw checksum errors and /dev/sdb became unresponsive to smartctl. So, this told me that it was less likely a power problem.

My Custom-built ZFS Kernel Module

Since the ZFS kernel module I installed wasn’t stock, I thought it would be worthwhile to try a stock kernel and prebuilt ZFS module. For this, I flashed another microSD card with the Armbian 20.04 Focal image, thinking there should be a kernel module and zfsutils-linux package. I got the image booted and tried installing the ZFS package, but it failed, complaining that the kernel module couldn’t be installed. Out of frustration, I took a simpler path. I decided to try creating the volumes using LVM and ext4. If I saw the same kernel errors, I would know the problem wasn’t isolated to the ZFS module. So, I set about creating physical and logical volumes. I wasn’t able to finish. During volume creation, the lvcreate command failed. I tried smartctl and /dev/sdb was unresponsive. The kernel log showed more of the now-familiar errors. OK. That ruled out my ZFS module.

SATA Controller

I had proven (to myself, at least) that none of the other components in the system were likely sources of the problems, so the only thing left to try was the SATA controller. The previously linked Pine64 forum post also mentioned some SATA controller chips that were known to work with the RockPro64, so I found a reasonably priced card based on the Marvell 9215 chipset and ordered it.

When the new card arrived, I swapped out the card, powered up the NAS with the microSD card containing the ayufan 18.04 image and crossed my fingers. I recreated the ZFS mirror pool and began the NextCloud sync, monitoring the kernel log and the ZFS pool status. After a complete sync (about 3 days) and a zpool scrub…

No errors!

Well, that was painful. But, it was also informative and a lot of fun! Again, if you’re looking to build a simple NAS and your use case is similar to mine, I highly recommend the Pine64 NAS! But, don’t use an ASMedia asm1061 or asm1062-based SATA controller.

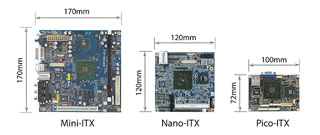

So the new Pico-ITX form factor reference design is targeted to consume about 1Watt of power under normal usage! Pretty amazing. This combined with RoHS compliance, I believe, are helping to push the industry toward lower and lower power consumption and better environmental impact.

Back in my college days (seems like ages ago now), I assembled my first PC. Ah, the memories. I hand-picked my components, trying to achieve the perfect balance of cheap, good, and fast. I had selected a motherboard and CPU combo with a 486 DX2-66MHZ processor, and 2 VLB slots and 6 ISA slots. I put in my 4 MB RAM from my first computer and lived with that for a while until I bit the bullet and added 4 MB and then another 4MB, eventually having 12MB RAM. I got a SoundBlaster AWE-32 which, at the time, was *the* top-of-the-line sound card. I also got a 2X Sony CDROM drive so I could play Myst. For the graphics card, I selected a 1MB VRAM STB Powergraph 24. 640×480@24-bit color, baby (my 14″ IBM monitor couldn’t handle resolutions above 640×480). For the communications (had to get on the ‘net!) I chose a Zoltrix 14400 model. This was a *huge* step up from my measly 2400baud modem that came with my PS/1.

Eventually, I upgraded, as the case had the worst arrangement for the drive cage. Any maintenance required disassembling the entire drive subsystem, which was a lot of work back in those days. I kept the old case lying around, and eventually revived it with old parts salvaged from discarded PCs (I believe it was a Pentium in the 150MHz range), and finally, I purchased overstock components and it lived out its last days as a 1GHz Celeron with 256MB RAM on an Epox baby AT motherboard (they actually made baby AT motherboards after the turn of the century!) The mobo/CPU/RAM live on in my current linux box, but that’s another story. As you can see, it also acquired a mass of stickers. The kana on the left side is the hiragana for my english name. I lovingly glued, X-acto knife’d out the excess, and taped over it with clear packing tape to preserve it for all time.